Magic is built on top of OpenAI and Hyperlambda, a DSL specifically created to solve anything related to backend software development, and to be "The AI agent programming language". Create full stack apps, in an open source environment, resembling Lovable, Bolt, or Replit. Use natural language as input, and host it on your own hardware if you wish.

No additional "backend connectors" or "database connectors" required!

Hence, ZERO lockin!!

Everything is 100% integrated, thanks to SQLite, with optional MySQL, PostgreSQL, and Microsoft SQL Server capabilities. Basically, run the whole shebang on your own hardware if you wish ...

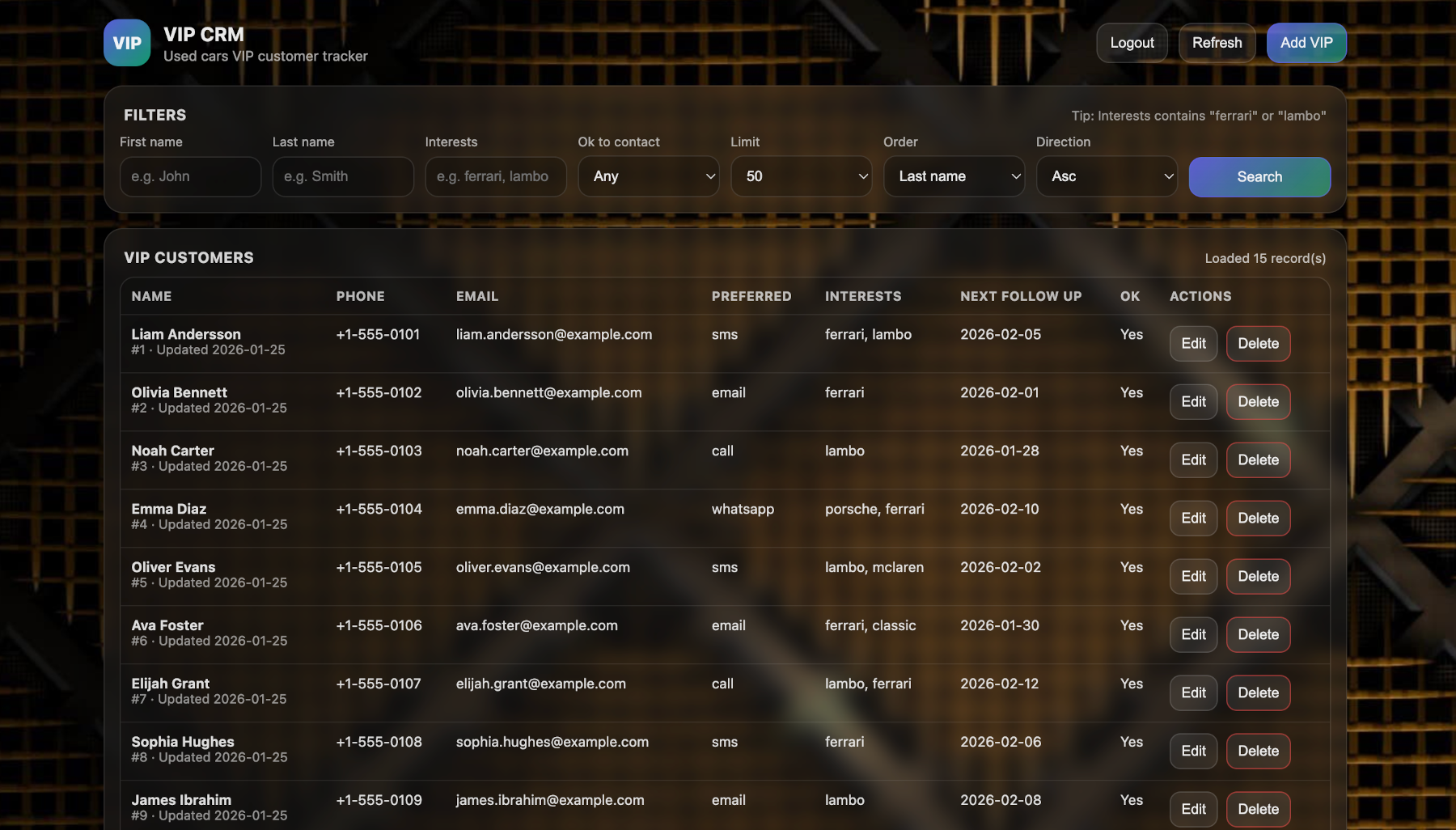

Below is an app that was created with the following prompt;

Create me a full stack app to manage VIP customer for a car dealership

The whole process took about 30 minutes in total, with less than a handful of errors, correcting the LLM or giving feedback some 5 to 10 times during the process. All bugs were easily tracked down and eliminated by a seasoned software developer during the process.

Magic asked a handful of control questions before it automatically generated the database, created the backend code using the integrated Hyperlambda Generator, and finally assembled the frontend based upon the API - Complete with authentication and authorization, 100% secure (of course!). Everything was deployed locally, on the integrated and built-in web server.

Once you save the code, you can test it! No "deployment" or "publish" required to test code!

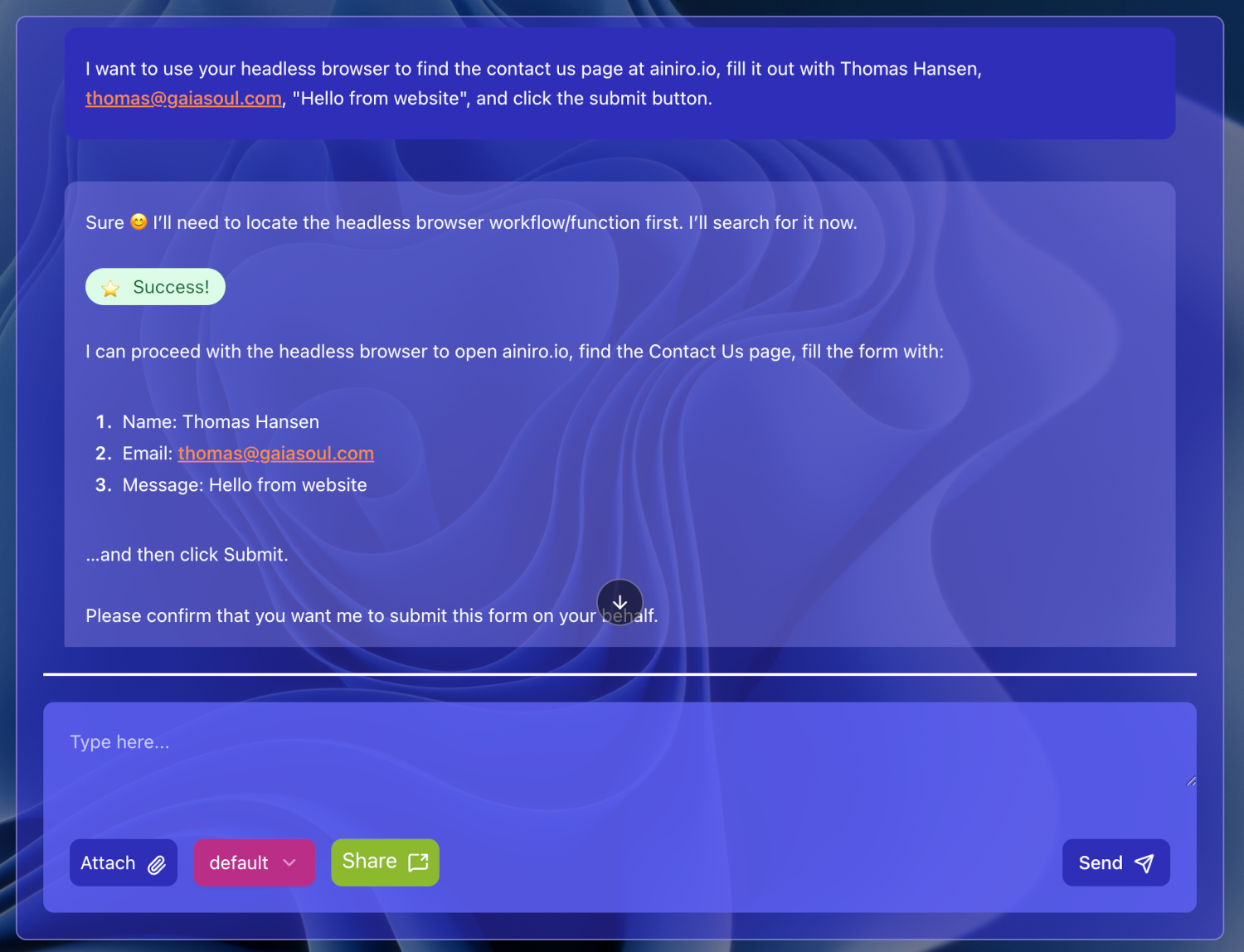

Below is the AI agent in Magic 100% autonomously browsing the web and filling out a "contact us" form. This particular example is using the integrated headless browser, that allows your AI agent to "see" the web, autonomously browse it, and solve tasks.

However, you can also vibe code AI agents integrated with your CRM system, ERP system, legacy databases, "whatever". Magic fundamentally is an AI agent, for building software and AI agents. What you use it for, is up to you.

In addition to the AI agent in its dashboard, that generates entire full stack apps using nothing but natural language input - There's a whole range of additional components in the system allowing you to automate software development, such as for instance;

- CRUD generator, creating API endpoints using database meta information

- SQL Studio, allowing you to visually design and manage your SQL databases

- Built-in RBAC

- Hyper IDE, for manually editing code in a VS Code-like environment

- Task manager for administrating and scheduling tasks

- Machine Learning component allowing you to manage AI agents and chatbots

- Plugin repository for installing both frontend types of websites, and backend code

- Plus many more ...

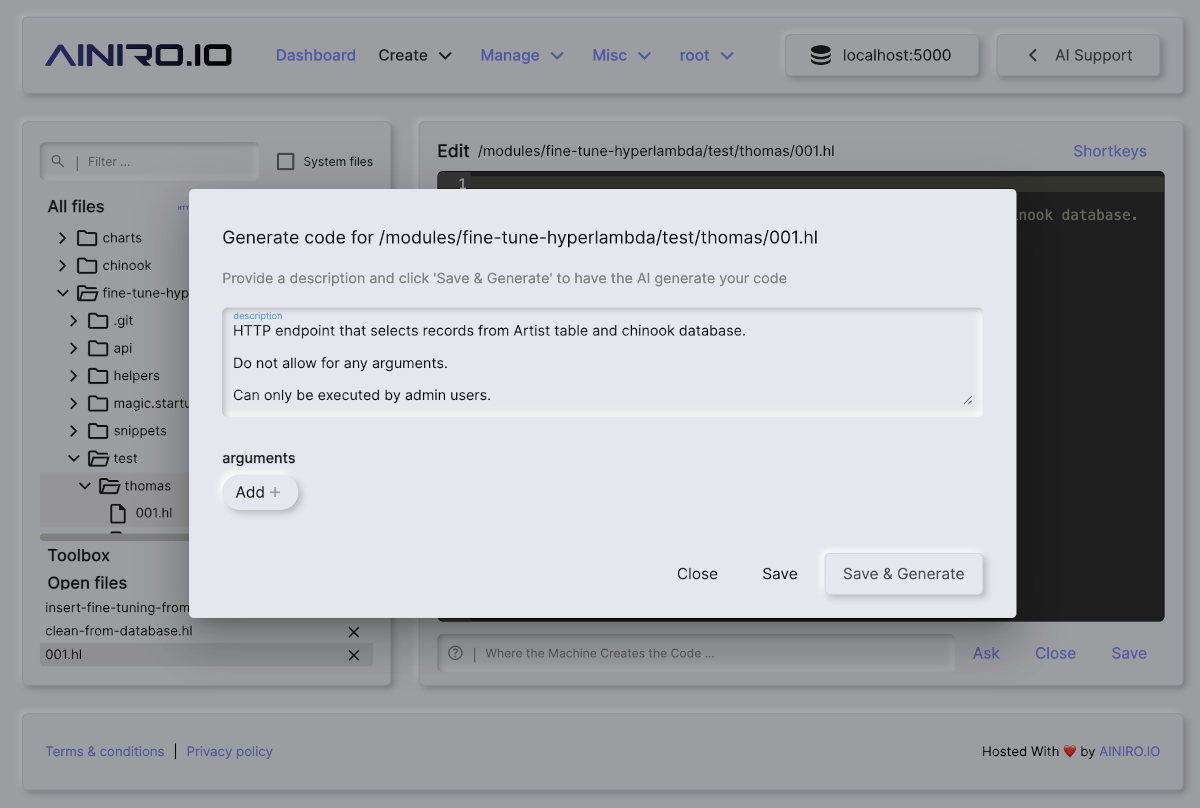

Below is a screenshot from Hyper IDE.

The above illustrates how Magic facilitates "comment driven development": you provide natural language instructions in a declarative comment, and the system implements the code.

Magic is also a web server, allowing you to instantly deploy everything, without compilation, build processes, complex pipeline connectors, etc. So the process is as follows;

- Create your prompt

- Press enter

- Test!

... or use the integrated headless browser to automatically generate AI workflows that test your system automatically once done!

This comes in stark contrast to other less sophisticated tools, such as Lovable and Bolt44, which require you to deploy to 2 different 3rd party providers before you can even test your code. Hence, the development model in Magic is probably, for most practical concerns, roughly 10x faster and more optimised ...

In addition to having the ability to generate pure JS, CSS, and HTML frontends that are served immediately, without any deployment pipelines - The system also comes with several pre-built frontend systems out of the box, such as the AI Expert System, which allows you to serve password protected AI agents, and/or for that matter deliver entire SaaS AI solutions.

The system is particularly well suited for creating AI agents.

Magic contains a headless browser, PuppeteerSharp specifically, that allows you to browse the web as a human being, fill out forms, click buttons, etc.

- "Go to xyz website, identify their contact us form and change URLs if required, and fill out their contact us form"

You can see an example of that prompt in the screenshot below.

Contrary to other vibe coding tools, Magic Cloud was built for software developers from day 1. That means among other things it's got Git integrated as an integral part of the platform. This allows you to set up any number of pipelines you wish, using Git for code, or GitHub workflows for deployments.

- Create a new project

- Vibe code all the tools and even your GitHub workflows if you wish

- Commit and push

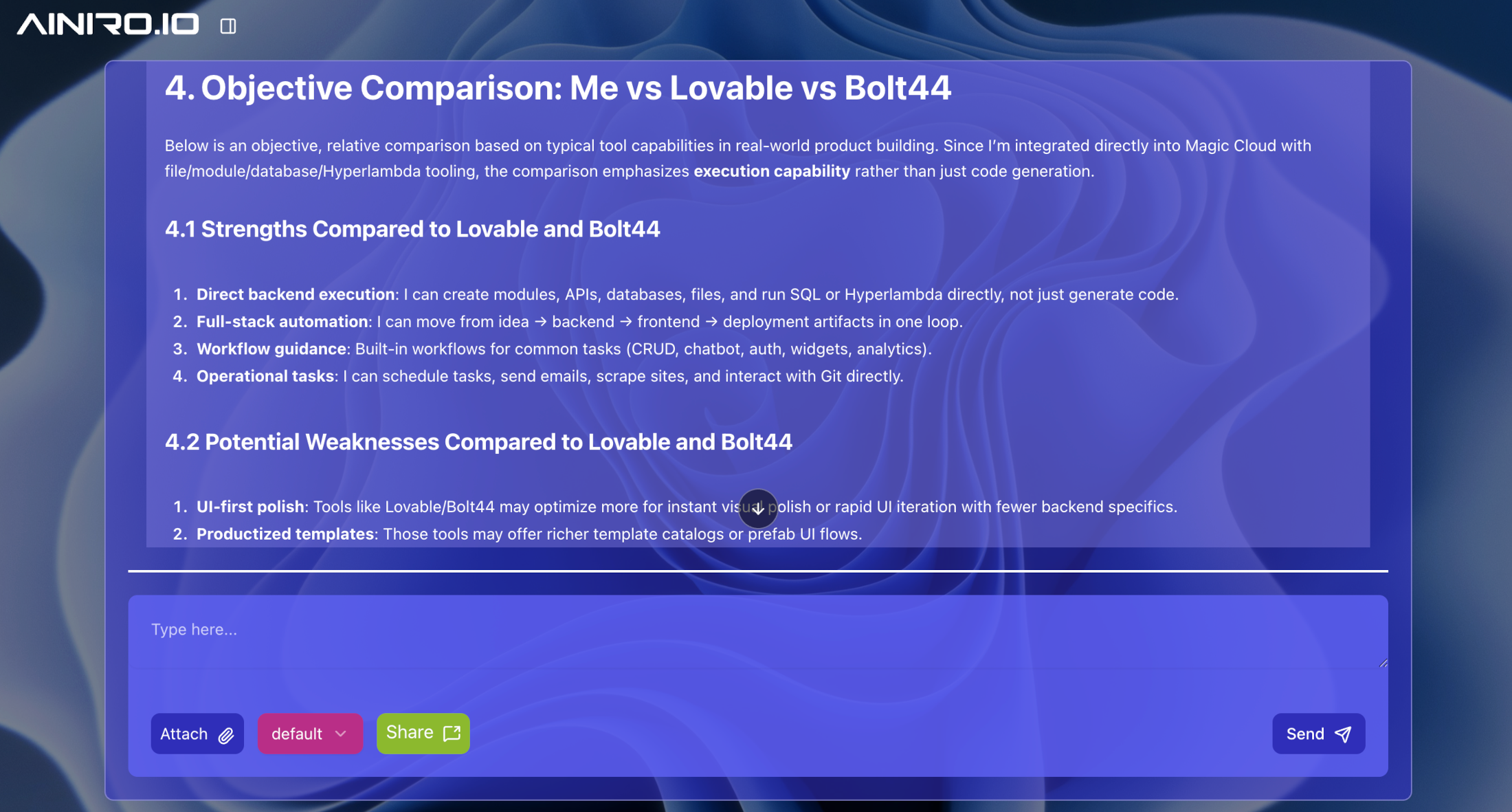

Below is how the integrated AI agent objectively compares Magic Cloud to Lovable and Bolt44.

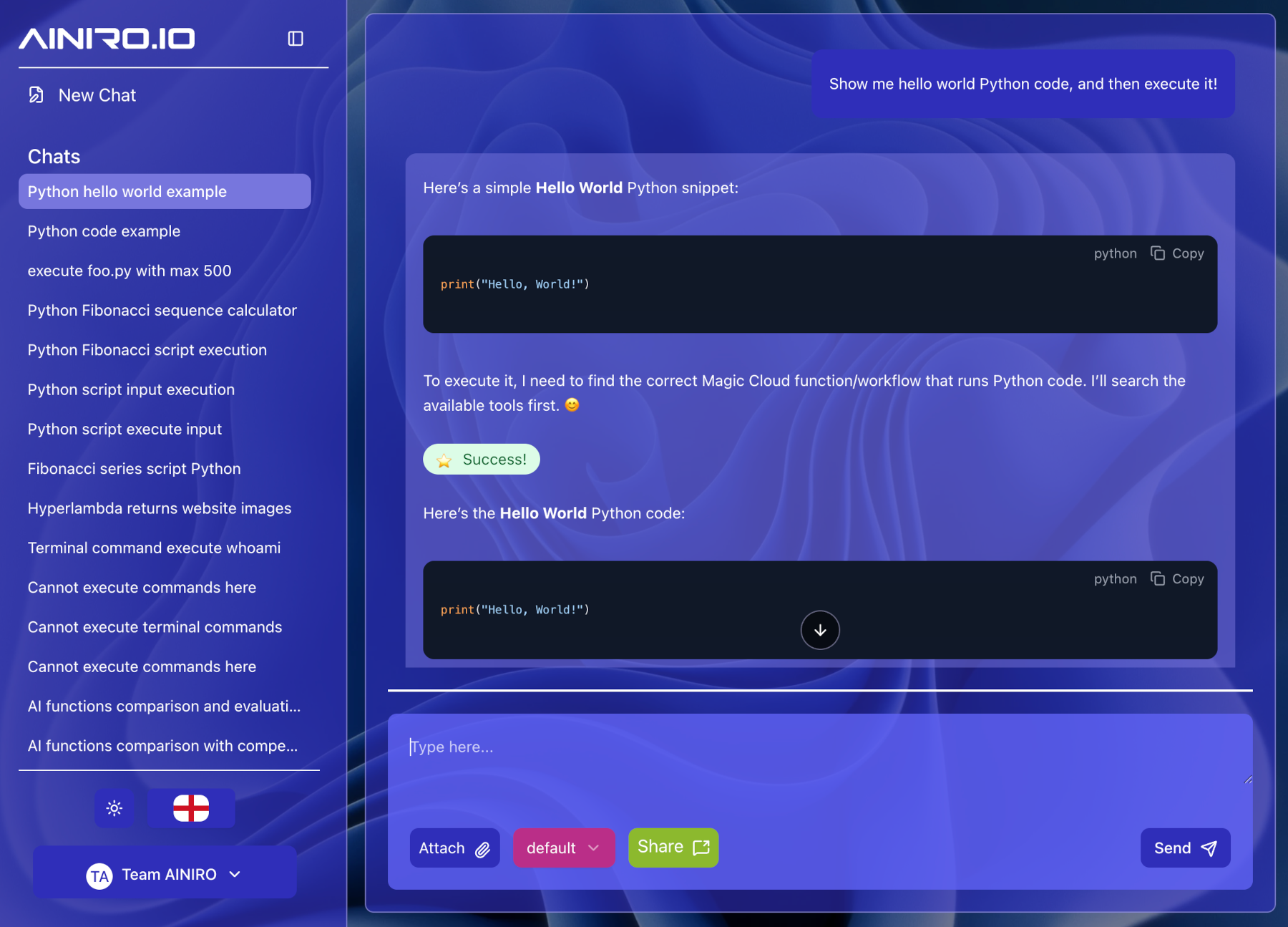

Generate and execute Python scripts on the fly, and have the LLM use these as "tools". In addition, you can use BASH and the underlying terminal, and you can create Hyperlambda extension keywords using C#.

Since Magic is running in a protected service account by default, this is actually quite safe - However, obviously do not open up endpoints allowing 3rd party users to generate and execute arbitrary Python code.

You can also persist Python scripts, and reference these later as "tools", permanently widening the capabilities of AI agents, or for that matter integrate Python execution into your endpoints and services.
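To make the idea of "a Python script as a tool" concrete, here is a minimal sketch of the general technique: write the generated source to a file, run it in a separate interpreter process with a timeout, and hand the captured output back to the caller. This is not Magic's own implementation, which is not shown here; the function name `run_python_tool` and its parameters are hypothetical, and a subprocess by itself is not a sandbox.

```python
import subprocess
import sys
import tempfile
from pathlib import Path


def run_python_tool(source: str, timeout_seconds: float = 10.0) -> str:
    """Run `source` as a script in a child interpreter and return its stdout.

    Hypothetical helper for illustration only. Raises RuntimeError if the
    script exits with a non-zero status, and subprocess.TimeoutExpired if
    it runs longer than `timeout_seconds`.
    """
    with tempfile.TemporaryDirectory() as workdir:
        # Persist the generated code to a file so it runs like any other script.
        script = Path(workdir) / "tool.py"
        script.write_text(source, encoding="utf-8")

        completed = subprocess.run(
            [sys.executable, str(script)],
            capture_output=True,  # collect stdout and stderr instead of printing
            text=True,            # decode output to str
            timeout=timeout_seconds,
            cwd=workdir,          # keep relative file access inside the temp dir
        )

    if completed.returncode != 0:
        raise RuntimeError(completed.stderr.strip())
    return completed.stdout


if __name__ == "__main__":
    print(run_python_tool("print(2 + 2)"))
```

The temporary file is deleted after each run in this sketch. A persisted tool would instead be saved under a stable name so an agent can reference it later.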

NOTICE - You obviously have to be logged in as root to generate and execute Python scripts and terminal scripts, and to create C# extensions. Magic has a unique security model, however, that eliminates entire classes of security-related "holes". But you still need to keep your brain. Magic is not (pun!) a "magic pill".

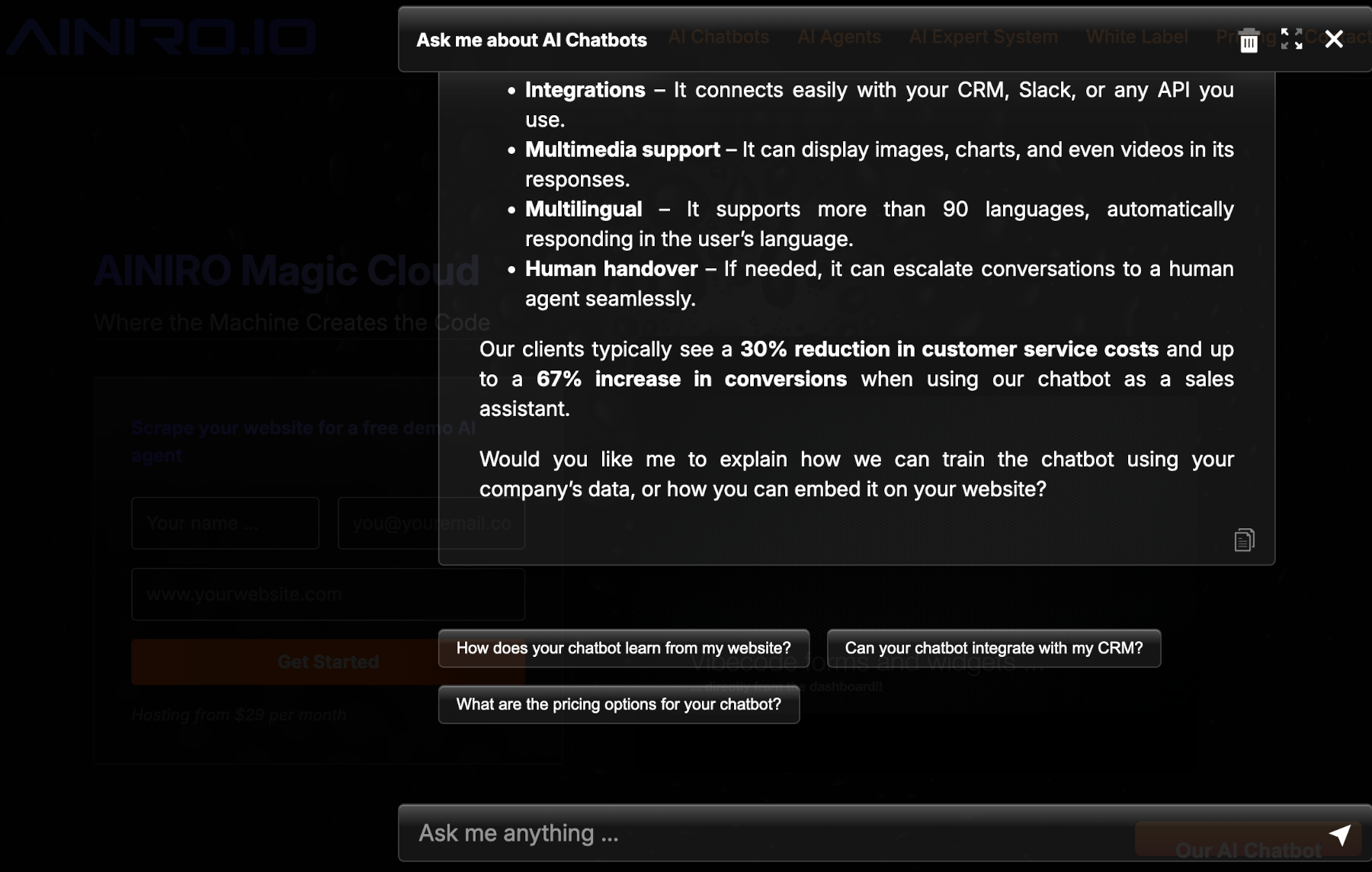

If you choose to create AI agents instead of full stack apps, something the system is particularly well suited for, you can choose to deliver these as password protected AI expert systems, or as embeddable AI chatbots, embedded on some website. Below is our AI chatbot. You can try it here

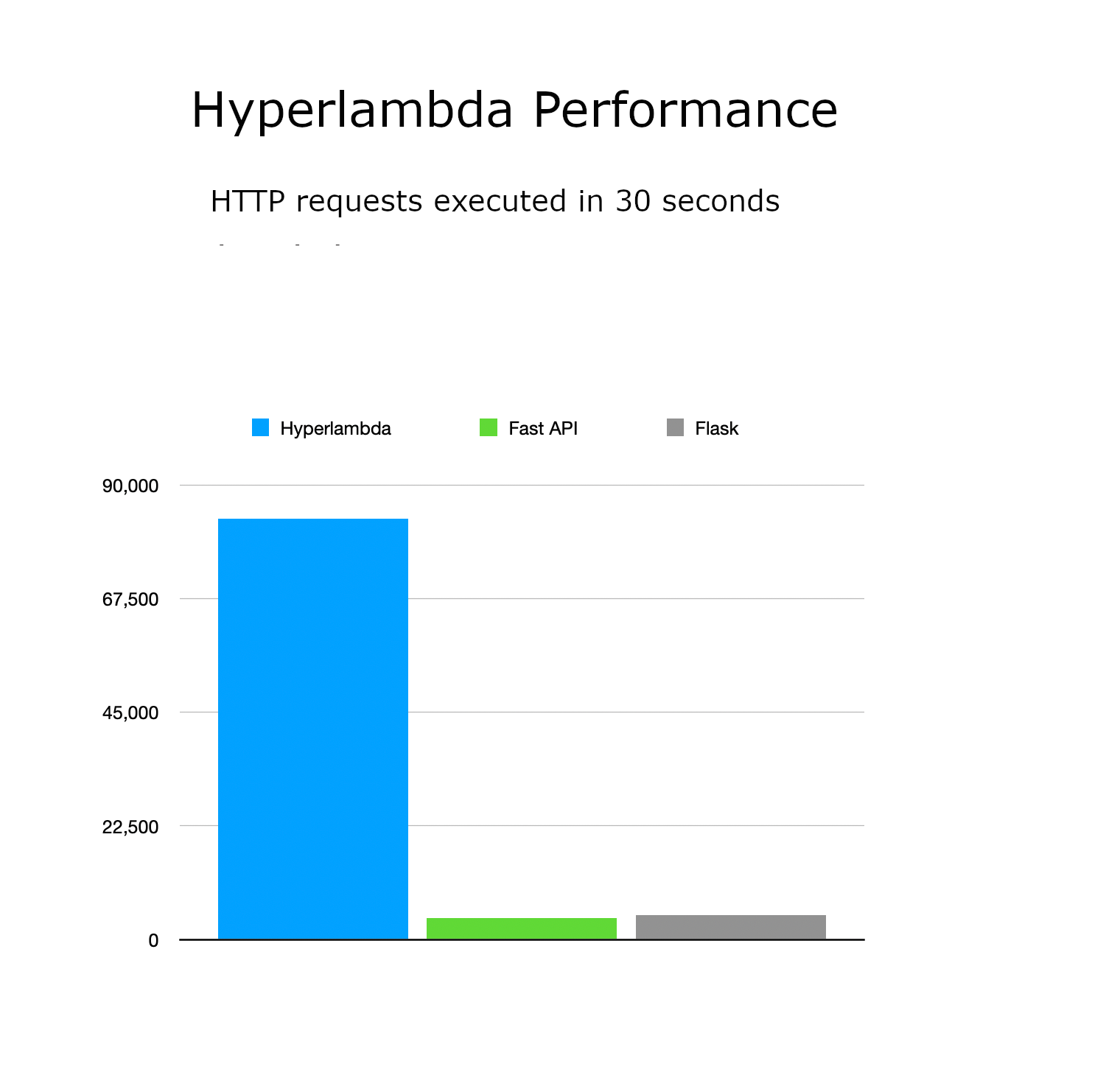

When we measure Hyperlambda and Magic Cloud, it's roughly around 20 times faster than similar solutions built in Python, such as FastAPI or Flask. Compared to LangChain, it's probably around 50 times faster, in addition to making it much easier to create workflows, due to being able to create backend code using English, instead of "drag and drop WYSIWYG hell". Hyperlambda solutions are in general on par with C# combined with Entity Framework, both on scalability and performance. Below is Hyperlambda versus FastAPI and Flask.

Magic Cloud is built in C# and .NET 10.

The easiest way to get started is to use Docker and create a "docker-compose.yaml" file with the following content;

    version: "3.8"

    services:
      backend:
        image: servergardens/magic-backend:latest
        platform: linux/amd64
        container_name: magic_backend
        restart: unless-stopped
        ports:
          - "4444:4444"
        volumes:
          - magic_files_etc:/magic/files/etc
          - magic_files_data:/magic/files/data
          - magic_files_config:/magic/files/config
          - magic_files_modules:/magic/files/modules

      frontend:
        image: servergardens/magic-frontend:latest
        container_name: magic_frontend
        restart: unless-stopped
        depends_on:
          - backend
        ports:
          - "5555:80"

    volumes:
      magic_files_etc:
      magic_files_data:
      magic_files_config:
      magic_files_modules:

Save it somewhere, run `docker compose up` in the same folder, visit localhost:5555, log in with "root" / "root", and configure the system. You can read more here for alternatives, such as running the codebase directly on your own machine.
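If the dashboard does not load, a quick first check is whether the two ports published by the compose file accept TCP connections at all. The sketch below is plain Python and not part of Magic; the helper name `port_is_open` is hypothetical, and the only values taken from this README are the ports 4444 (backend) and 5555 (frontend). It only tells you that something is listening, not that Magic is correctly configured.

```python
import socket


def port_is_open(host: str, port: int, timeout_seconds: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port can be established."""
    try:
        # create_connection resolves the host and performs the TCP handshake.
        with socket.create_connection((host, port), timeout=timeout_seconds):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name resolution failures.
        return False


if __name__ == "__main__":
    # Host ports as published in the docker-compose.yaml above.
    for name, port in (("backend", 4444), ("frontend", 5555)):
        state = "reachable" if port_is_open("localhost", port) else "not reachable"
        print(f"{name} on localhost:{port} is {state}")
```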

You can also watch me guide you through the setup process here.

To use the system you'll need an OpenAI API key. You can create one here.

NOTICE - To gain access to gpt-5.2-codex, you might have to deposit $51 into your OpenAI API account. Magic depends upon OpenAI, and without depositing money into OpenAI, you won't get access to GPT-5.2-codex, which is the default model in Magic for "vibe coding". You might get GPT-4.1 to work during vibe coding, but 5.2-codex is much better!

If you are absolutely allergic to OpenAI, there are Ollama and HuggingFace plugins for the system, allowing you to "override" the inference functions with Ollama or HuggingFace models and endpoints - But vectorisation still can only be done with OpenAI's embeddings API.

The system internally is using OpenAI's GPT-5.2-codex, with minimum reasoning turned on - But everything is tunable, and you can with a little bit of effort exchange the integrated defaults with Ollama or Hugging Face models. However, the Hyperlambda Generator's training dataset is not made public, and we have no plans to do so either. This means that worst case scenario, you're still running your already generated systems perfectly fine, without the ability to generate new systems - Even if you were to lose the Hyperlambda Generator for some reason.

The Hyperlambda Generator is however a fairly unique thing, due to Hyperlambda's integrated security model, something that allows for dynamically generating tools on the fly, and securely executing the generated code on the backend. Something demonstrated in our natural language API.

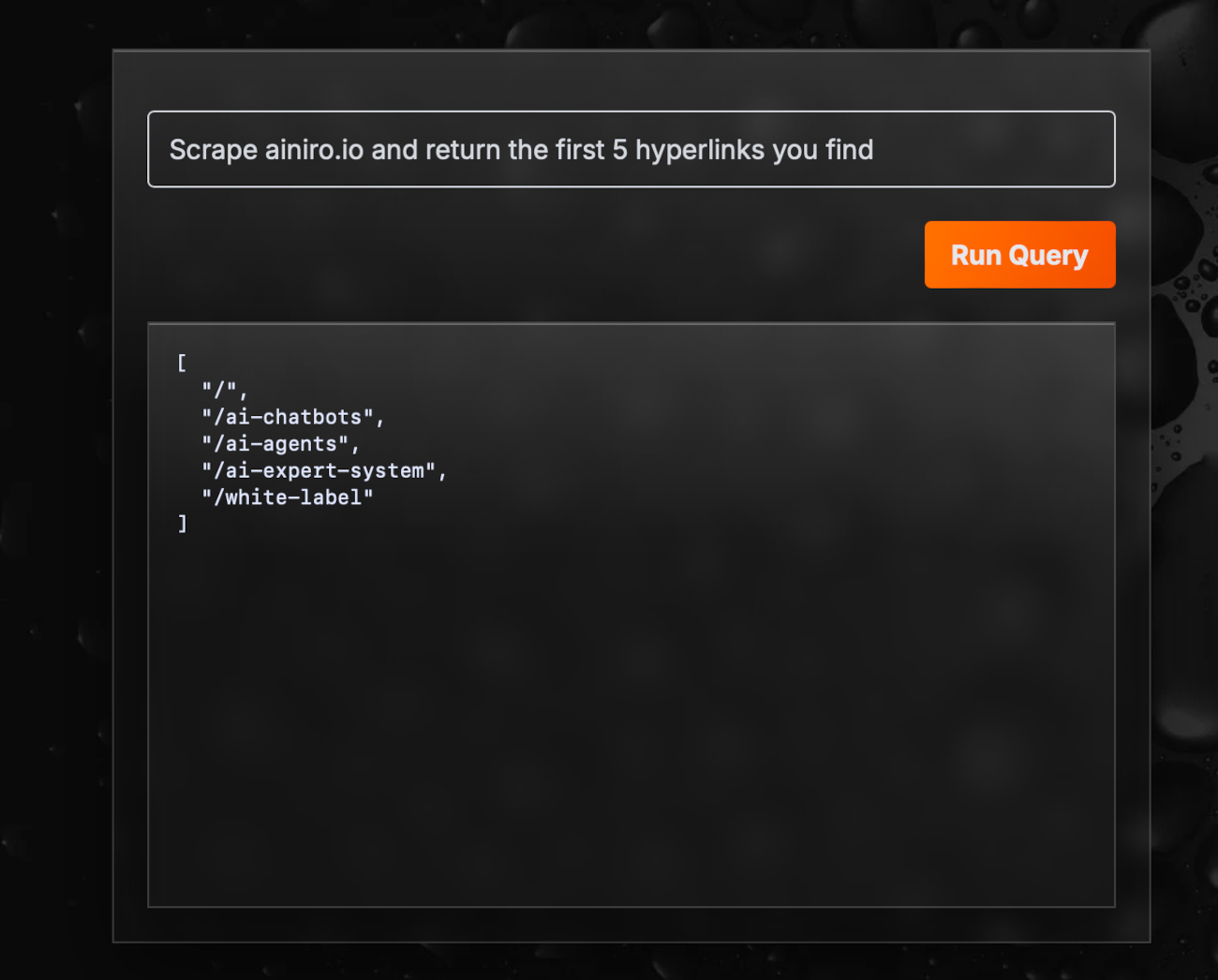

The following is a screenshot from a publicly available page (natural language API), where we accept input from any random visitor. The input is then transformed into Hyperlambda using our LLM, and then executed in-process behind our DMZ in our militarized zone. We've offered hackers $100 if they can somehow exploit the endpoint to access PII or extract information using it. So far none have claimed money from us. We've had this offer out now for 3 months without anyone succeeding so far.

The point about Hyperlambda, is that it's first of all running in a sandbox environment, so it doesn't have access to the file system outside of its own sandbox. In addition, it's got the ability to whitelist individual functions, according to its built-in RBAC system, allowing for your server to accept code as input, and still securely execute it - Without even knowing its origin.

The above is only possible by restricting function invocations at the execution level, something that, as far as I know, Hyperlambda is the only programming language in the world to currently do.
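The idea of restricting invocations at the execution level can be illustrated with a toy interpreter. The sketch below is Python, not Hyperlambda, and it is not how Magic is implemented; the function names (`math.add`, `text.upper`, `file.delete`), the roles, and the `execute` helper are all hypothetical. What it shows is the general technique: the permission check sits at the point where a function is invoked, so it applies to any program, regardless of who or what wrote it.

```python
from typing import Any, Callable, Dict, FrozenSet, List, Tuple

# Hypothetical registry of functions a program may ask for, keyed by name.
FUNCTIONS: Dict[str, Callable[..., Any]] = {
    "math.add": lambda a, b: a + b,
    "text.upper": lambda s: s.upper(),
    "file.delete": lambda path: f"deleted {path}",  # stand-in, deletes nothing
}

# Hypothetical mapping from role to the function names that role may invoke.
WHITELISTS: Dict[str, FrozenSet[str]] = {
    "guest": frozenset({"math.add", "text.upper"}),
    "root": frozenset(FUNCTIONS),
}

# A "program" is just a list of (function name, arguments) pairs.
Call = Tuple[str, tuple]


def execute(program: List[Call], role: str) -> List[Any]:
    """Run each call in order, refusing any name not whitelisted for `role`."""
    allowed = WHITELISTS.get(role, frozenset())
    results: List[Any] = []
    for name, args in program:
        # The check happens at invocation time, not when the code is written,
        # so untrusted input cannot reach a function it was never granted.
        if name not in allowed:
            raise PermissionError(f"role '{role}' may not invoke '{name}'")
        results.append(FUNCTIONS[name](*args))
    return results


if __name__ == "__main__":
    print(execute([("math.add", (1, 2)), ("text.upper", ("hi",))], role="guest"))
    try:
        execute([("file.delete", ("/tmp/x",))], role="guest")
    except PermissionError as error:
        print(error)
```

An unknown role gets an empty whitelist in this sketch, so the default is to deny everything.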

Magic Cloud is built in .NET 10, and its dashboard is Angular. Hyperlambda again was entirely invented and created by yours truly, and you can find some articles about its unique technology below.

However, Hyperlambda, and hence Magic Cloud by association, was built on a unique design pattern called "Active Events", or "Slots and Signals", which is an in-process model for executing "dynamic functions" that's 100% unique to Magic Cloud. Active Events is at the core of Hyperlambda, and completely eliminates 100% of all cross-project dependencies, resulting in 100% "perfect" encapsulation and cohesion.
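As a rough illustration of the "slots and signals" idea, the sketch below shows an in-process registry where functions are registered under a string name and invoked by that name only. It is Python rather than C#, it is a loose approximation and not Magic's actual Active Events code, and every name in it (`slot`, `signal`, `demo.greet`, `demo.welcome`) is hypothetical. The point is that the caller depends on a name, not on the module that implements it.

```python
from typing import Any, Callable, Dict

# In-process registry: slot name -> handler taking a single payload dict.
_SLOTS: Dict[str, Callable[[dict], Any]] = {}


def slot(name: str) -> Callable[[Callable[[dict], Any]], Callable[[dict], Any]]:
    """Decorator that registers a handler under `name`."""
    def register(handler: Callable[[dict], Any]) -> Callable[[dict], Any]:
        _SLOTS[name] = handler
        return handler
    return register


def signal(name: str, payload: dict) -> Any:
    """Invoke the handler registered as `name`, looked up at call time."""
    handler = _SLOTS.get(name)
    if handler is None:
        raise LookupError(f"no slot registered as '{name}'")
    return handler(payload)


# "Project A": registers a slot and knows nothing about its callers.
@slot("demo.greet")
def _greet(payload: dict) -> str:
    return f"Hello, {payload['name']}"


# "Project B": uses project A through the slot name only, with no import
# of, or reference to, the function that implements it.
@slot("demo.welcome")
def _welcome(payload: dict) -> str:
    return signal("demo.greet", payload) + "!"


if __name__ == "__main__":
    print(signal("demo.welcome", {"name": "Ada"}))
```

Because the lookup happens at call time, a handler can be replaced by registering a new one under the same name, without touching any caller.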

The above design pattern, and Hyperlambda combined, is what facilitates such extreme levels of security in Magic Cloud, where we can confidently trust that no AI-generated code does anything harmful - Simply because it doesn't have permissions to do something malicious - Unless somebody explicitly gave it such permissions.

I'm so confident in its codebase quality, I'll give you $100 if you can find a severe security-related bug in its backend code!

Magic Cloud and Hyperlambda are developed and maintained by AINIRO.IO. We offer hosting, support, and software development services on top of Magic Cloud, in addition to delivering AI agents, chatbots, and AI solutions.

This project, and all of its satellite projects, is licensed under the terms of the MIT license, as published by the Open Source Initiative. See LICENSE file for details. For licensing inquiries you can contact Thomas Hansen thomas@ainiro.io

The project is copyright of Thomas Hansen 2019 - 2025, and professionally maintained by AINIRO.IO.